Top Visual Regressions that You Can Spot with and without Automated Testing

Visual bugs are typically considered easy to spot. You can often see what’s missing or what’s amiss on a web page.

A photo that sits too far to the left, the wrong fonts, a missing button -- these are things you can spot at a glance. But not all visual bugs are that easy to catch, especially with the naked (or untrained) eye.

This is where automated visual regression tools like Diffy come into play. They can help you spot elusive visual bugs easily, even if you have to test hundreds of pages.

Let’s take a quick look at some of the most common visual bugs.

Top Visual Regressions -- What You Can Spot without Testing Solutions and What You Can’t

Since we test hundreds and hundreds of websites every day through Diffy, we can safely say that we’ve seen our fair share of visual bugs. We have noticed a few patterns in where things typically break and, consequently, where QA testers or website administrators could benefit the most from automated testing.

Easy-to-Spot Visual Bugs

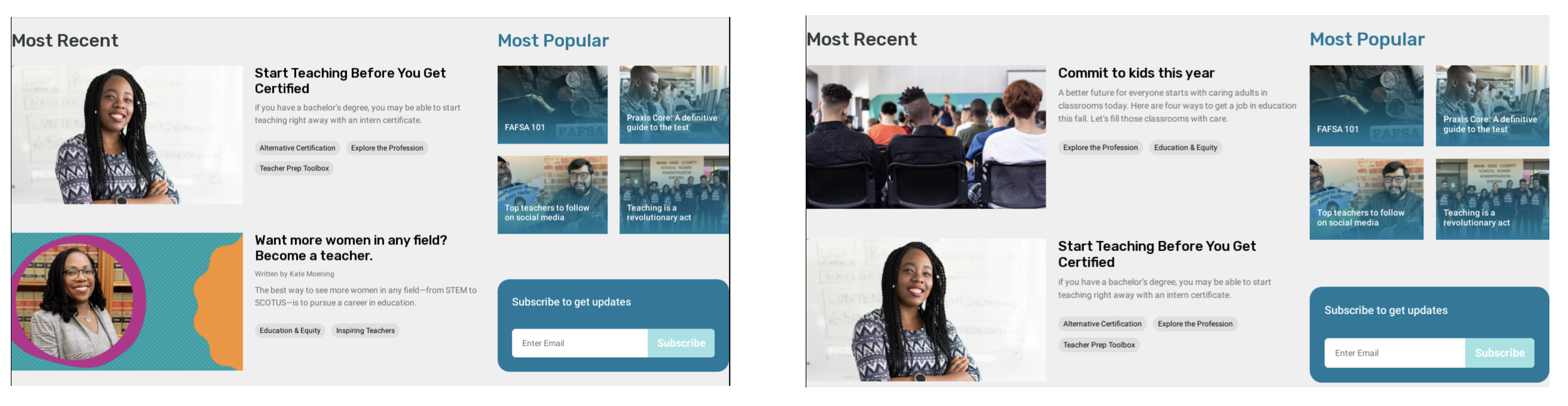

Content Updates or Changes

When something changes with your web copy or content, you can easily spot it at a glance. You’ll see that the text is different or that the various text blocks don’t look quite like they used to.

Of course, a visual regression testing solution will spot this as well. If you use such a tool, a good practice is to synchronize the content between the environments you compare -- staging and production, for instance. This way, content differences do not show up in the comparison and you avoid false positives.
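
To see why unsynchronized content produces false positives, it helps to picture the simplest possible comparison. The sketch below is only an illustration, not how Diffy or any particular tool works: it assumes two screenshots of the same size, represented as rows of RGB tuples, and the name `changed_ratio` and the `tolerance` parameter are made up for this example.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]
Image = List[List[Pixel]]


def changed_ratio(baseline: Image, current: Image, tolerance: int = 0) -> float:
    """Return the fraction of pixels whose RGB channels differ by more than `tolerance`."""
    if len(baseline) != len(current) or any(
        len(a) != len(b) for a, b in zip(baseline, current)
    ):
        raise ValueError("screenshots must have the same dimensions")

    total = 0
    changed = 0
    for row_a, row_b in zip(baseline, current):
        for px_a, px_b in zip(row_a, row_b):
            total += 1
            # A pixel counts as changed if any channel moved by more than the tolerance.
            if any(abs(ca - cb) > tolerance for ca, cb in zip(px_a, px_b)):
                changed += 1
    return changed / total if total else 0.0


WHITE = (255, 255, 255)
BLACK = (0, 0, 0)

# Hypothetical 2x2 "screenshots": staging has one dark pixel that production lacks.
staging = [[WHITE, WHITE], [BLACK, WHITE]]
production = [[WHITE, WHITE], [WHITE, WHITE]]

print(changed_ratio(staging, production))  # 0.25
```

A comparison like this cannot tell an edited headline from a broken layout. Both are just changed pixels, which is why keeping the content identical across environments matters.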

Layout Issues

Layout issues are also fairly easy to spot. If you uploaded an image that is too small or if your code is treating the last item of the block differently, you will see it right away.

Integration with External APIs

This bug is not that easy to see with the naked eye, but it’s still possible to catch it without an automated testing solution. Your API key may be missing or something else may be wrong with the integration module.

Either way, you will be able to spot the error if you test the page manually.

There are also a few bugs that you will rarely catch without automated testing tools. Let’s look at them.

Visual Bugs that Are Hard to Catch without an Automated Testing Solution

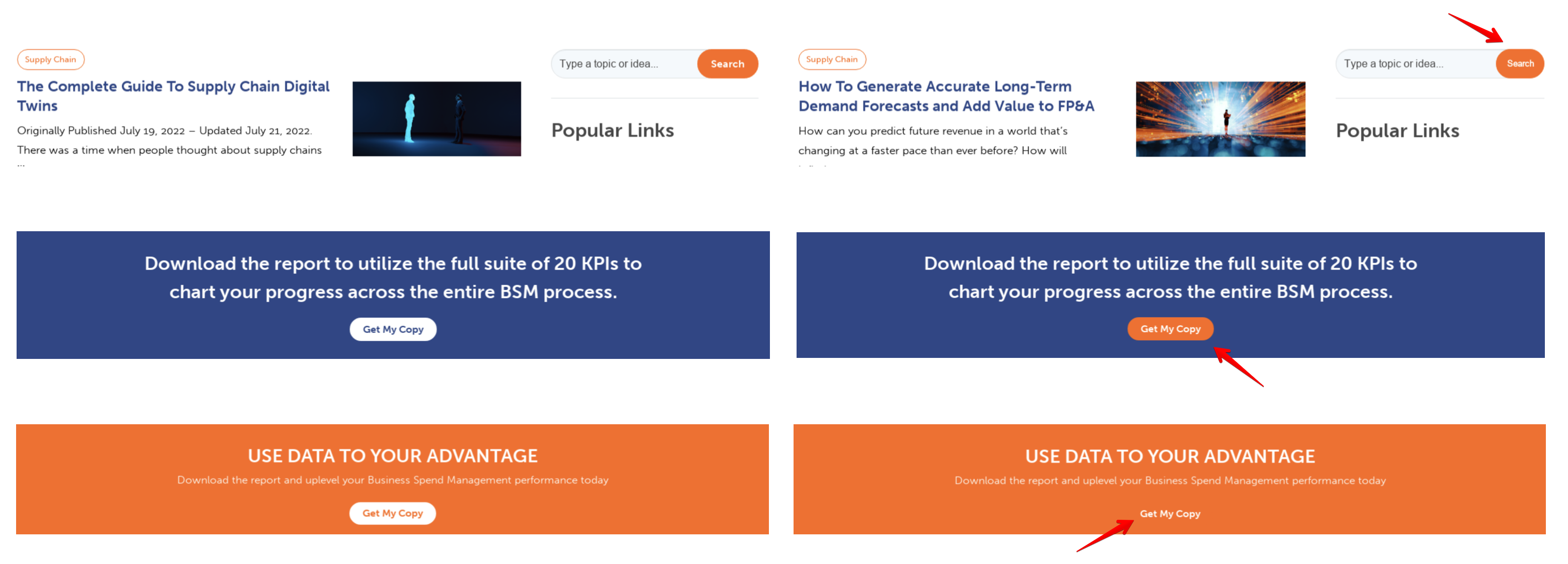

Button Styles

This is a very, very common bug. Unfortunately, it’s also quite hard to catch.

If the bug is just a difference in color (for instance, an orange button on an orange background), you can spot it yourself. But sometimes this type of bug is much sneakier.

For example, if you also have a change in content -- which you typically do when you add new buttons -- you tend to look at the “big picture” and the button errors go unseen. In these cases, the font and the button size can change without you even noticing.

Who will notice it instead? That’s right -- the users.

Even though they can’t pinpoint it and say “here’s a nasty bug,” the UX quality decreases and so does the effectiveness of your buttons. Ultimately, it's your bottom line that gets hurt, all because of a tiny change in button fonts or colors.

A visual regression tool, on the other hand, will spot this unwanted change very quickly and alert you to it before it can do any real damage to your website and your ROI.
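
As a rough illustration of how a comparison can point at a change that the eye skips over, the sketch below finds the smallest box that contains every changed pixel. It assumes two grayscale screenshots of identical size stored as rows of brightness values. The function name `changed_region` and the sample data are hypothetical, and real tools use more elaborate comparisons.

```python
from typing import List, Optional, Tuple

Gray = List[List[int]]  # rows of 0-255 brightness values


def changed_region(baseline: Gray, current: Gray) -> Optional[Tuple[int, int, int, int]]:
    """Return (left, top, right, bottom), inclusive, of the smallest box
    containing all changed pixels, or None if the images are identical.
    Assumes both images have the same dimensions."""
    xs, ys = [], []
    for y, (row_a, row_b) in enumerate(zip(baseline, current)):
        for x, (a, b) in enumerate(zip(row_a, row_b)):
            if a != b:
                xs.append(x)
                ys.append(y)
    if not xs:
        return None
    return (min(xs), min(ys), max(xs), max(ys))


# Hypothetical 6x4 page: a dark "button" spans columns 1-3 in the baseline
# and has grown one pixel wider (columns 1-4) in the current version.
before = [
    [255, 255, 255, 255, 255, 255],
    [255, 0, 0, 0, 255, 255],
    [255, 0, 0, 0, 255, 255],
    [255, 255, 255, 255, 255, 255],
]
after = [
    [255, 255, 255, 255, 255, 255],
    [255, 0, 0, 0, 0, 255],
    [255, 0, 0, 0, 0, 255],
    [255, 255, 255, 255, 255, 255],
]

print(changed_region(before, after))  # (4, 1, 4, 2)
```

A one-pixel growth like this is exactly the kind of change a person misses while reviewing new content, yet it yields a precise region that can be highlighted for review.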

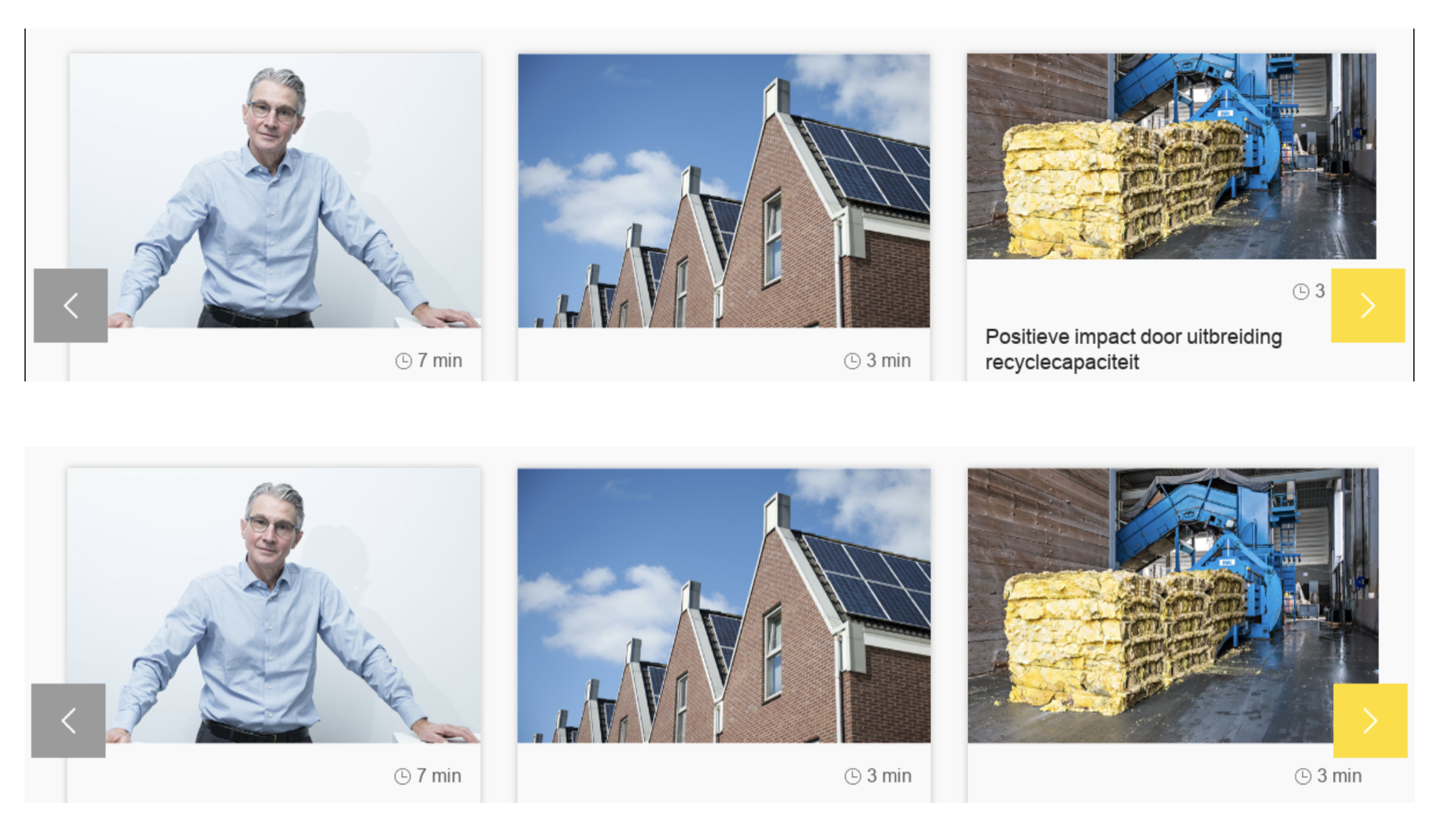

Image Optimization

When you change your hosting provider, update the PHP code, or change the settings of your image optimization process, you will usually end up with some unwanted changes as well. One of the most common errors is blurred images or blurred backgrounds.

In other words, you try to optimize your images to save some bandwidth for your users, but you end up with blurred pictures, which harm your UX more than slightly larger images that take an extra millisecond to load would.

This type of bug is pretty hard to catch when you simply look at a page, especially if you only check it at a single breakpoint. At other breakpoints, though, such as desktop, it will be very obvious to users.

Again, running automated tests is the best solution here. These tests cover multiple browsers and devices, so you can be sure that the images look good whatever your visitors are using.
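
Blur can also be measured directly. One common heuristic, shown here purely as a sketch and not as what Diffy does, is the variance of a Laplacian filter: sharp images have strong edges and score high, while blurred ones score low. The example assumes grayscale images stored as rows of numbers. `sharpness` and `box_blur` are illustrative names, and the blur function merely stands in for an overly aggressive optimization step.

```python
from typing import List

Gray = List[List[float]]  # rows of 0-255 brightness values


def sharpness(image: Gray) -> float:
    """Variance of a 4-neighbour Laplacian over interior pixels.
    Higher values mean more edge detail."""
    height, width = len(image), len(image[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            responses.append(
                image[y - 1][x] + image[y + 1][x]
                + image[y][x - 1] + image[y][x + 1]
                - 4 * image[y][x]
            )
    if not responses:
        return 0.0
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)


def box_blur(image: Gray) -> Gray:
    """3x3 mean filter with clamped edges, used here to simulate a blurry result."""
    height, width = len(image), len(image[0])
    blurred = []
    for y in range(height):
        row = []
        for x in range(width):
            neighbours = [
                image[min(max(y + dy, 0), height - 1)][min(max(x + dx, 0), width - 1)]
                for dy in (-1, 0, 1)
                for dx in (-1, 0, 1)
            ]
            row.append(sum(neighbours) / 9)
        blurred.append(row)
    return blurred


# Hypothetical test pattern: an 8x8 checkerboard is as sharp as an image gets.
original = [[255.0 if (x + y) % 2 == 0 else 0.0 for x in range(8)] for y in range(8)]
optimized = box_blur(original)

print(sharpness(optimized) < sharpness(original))  # True
```

Comparing the score of the same image before and after a hosting or optimization change gives a number to act on, rather than relying on someone noticing softness at one particular screen size.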

Font Size

It may seem like a trivial thing, but you’d be surprised at how hard it is to spot inconsistencies in font sizes without an automated testing tool. In fact, this is probably the trickiest bug on this entire list.

When you change the font size of the headings and it’s applied just to some specific elements, inconsistencies are very hard to notice if you’re merely glancing through the website. A few points’ change in the font size is not noticeable to the naked eye, especially if the words with the changed fonts are not right next to each other.

Of course, it’s not a tragedy. It is, however, a regression, and one that harms your UX. Again, even if it’s not noticeable to the naked eye, your visitors will sense that something is amiss. It may not deter them from using your website or buying your products, but if you’re aiming for stellar UX, you need to run automated tests to catch this bug.

Unexpected Font Changes

Yes, these happen. Typically, you declare your fonts in CSS as a font-family list, with fallback fonts listed one after the other in case one of them is missing. At first glance, the fonts in the list look alike.

But if for some reason your favorite font cannot be loaded, the browser will automatically fall back to the next one in the list. This is something a designer might notice -- they typically have a trained eye for this -- but a QA person may easily overlook it. It’s even easier to miss if it only affects certain parts of your website.

On the other hand, for a visual regression testing tool, this is very easy to catch.
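
The fallback behavior itself is easy to model. The sketch below is a simplified illustration of how a font-family list resolves, not a full implementation of CSS font matching: it splits the list on commas and ignores details such as per-character fallback. The function names and the font names in the example are assumptions chosen for this illustration.

```python
from typing import Iterable, List, Optional


def parse_font_stack(value: str) -> List[str]:
    """Split a CSS font-family value into family names, dropping surrounding quotes.
    Simplification: assumes no commas inside quoted names."""
    return [name.strip().strip("'\"") for name in value.split(",") if name.strip()]


def first_available(stack: List[str], available: Iterable[str]) -> Optional[str]:
    """Return the first family in the stack that is available (case-insensitive)."""
    loaded = {name.lower() for name in available}
    for family in stack:
        if family.lower() in loaded:
            return family
    return None


stack = parse_font_stack('"Open Sans", Helvetica, Arial, sans-serif')

# Hypothetical situations: the web font loads, then fails to load.
with_web_font = {"Open Sans", "Helvetica", "Arial", "sans-serif"}
without_web_font = {"Helvetica", "Arial", "sans-serif"}

print(first_available(stack, with_web_font))     # Open Sans
print(first_available(stack, without_web_font))  # Helvetica
```

Nothing errors out when the first font is missing. The page simply renders in the next family, which is why the change tends to surface only in a screenshot comparison or under a designer's eye.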

If you are interested in trying automated visual regression testing, you are welcome to register for an account and give it a try.